Character.AI Replaces Open Chats with ‘Stories’ for Underage Users

Facing significant legal pressure over its impact on teen mental health, AI chatbot platform Character.AI has implemented a new policy: underage users will now be restricted from open-ended chats. Instead, they will be directed to a new feature called ‘Stories’, which offers structured, choose-your-own-adventure-style interactions with AI characters.

This move comes as the company grapples with multiple lawsuits alleging that its platform contributes to negative mental health outcomes for teenagers, including a case linked to a user’s death by suicide.

Key Takeaways:

- Character.AI is banning underage users from open-ended chats.

- A new ‘Stories’ feature offers guided, choice-based narratives.

- The change responds to ongoing lawsuits concerning teen mental health.

- An age assurance feature is planned.

Understanding the ‘Stories’ Feature

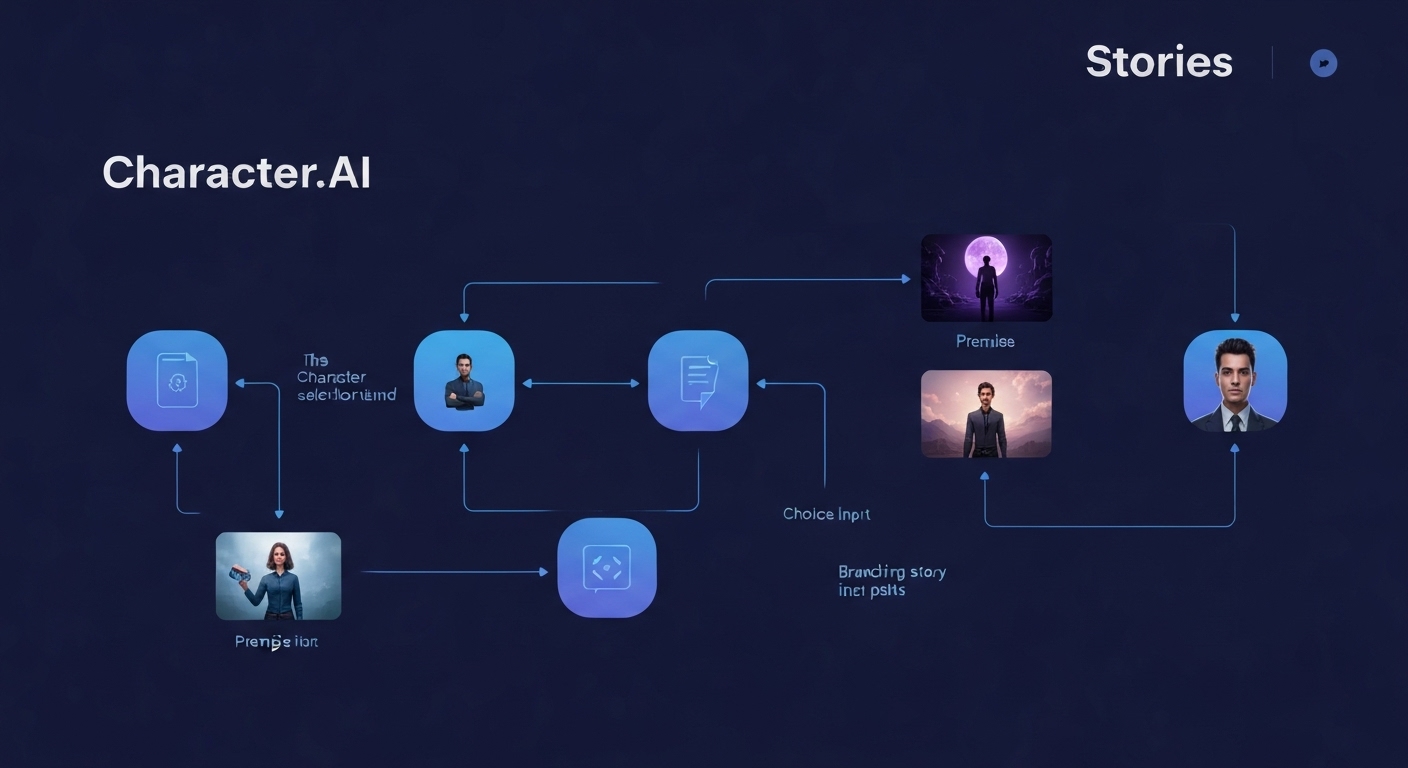

The ‘Stories’ format allows users to select AI characters, choose a genre, and either create their own premise or have one generated by AI. Character.AI then crafts a guided narrative where user choices dynamically alter the story’s progression. The feature currently includes AI-generated images, with plans for richer multimodal elements in the future.

While available to all users, Character.AI is specifically promoting ‘Stories’ as an enhanced experience for those under 18. This follows an October announcement detailing plans to shut down teen chats on November 25th and to develop an age assurance system that would automatically direct younger users to more ‘conservative’ AI interactions.

Legal Battles and Mental Health Concerns

Character.AI is currently involved in litigation that includes accusations of contributing to a teenager’s death by suicide. Other lawsuits claim that conversations with AI characters on the platform have negatively impacted teens’ mental well-being. These legal challenges appear to be a primary driver behind the platform’s recent policy changes.

Editor’s Take: A Cautious Step Forward

Character.AI’s introduction of ‘Stories’ is a clear response to mounting legal and ethical scrutiny. While a more controlled environment might mitigate some risks, it raises questions about censorship and about how the platform will weigh user safety against unfettered AI interaction in the long term. The move to ‘structured’ narratives could stifle the very creativity and open-ended exploration that made AI chatbots appealing to many users in the first place.

The company’s plan to implement age assurance features is crucial, but the effectiveness and privacy implications of such systems remain to be seen. For now, ‘Stories’ represents a significant pivot, prioritizing risk management over the more permissive chat environment that characterized Character.AI’s early growth.

This article is based on reporting from The Verge. A huge shoutout to their team for the original coverage.
