The Blurry vs. Fragmented Divide in AI

Artificial intelligence often grapples with two distinct approaches to understanding the world: neural networks and symbolic systems. Neural networks, like those powering image recognition and natural language processing, excel at pattern recognition but can be opaque, often described as ‘blurry’ in their internal logic. They learn from vast amounts of data but struggle to articulate explicit rules or reason symbolically.

Conversely, symbolic AI systems operate on explicit rules, logic, and discrete symbols. These systems are highly interpretable and excel at tasks requiring structured reasoning, but they can be brittle and fragmented. They often fail to generalize well to new, unseen data and struggle with the nuances and ambiguities present in the real world.

Enter Sparse Autoencoders: The Bridge Builders

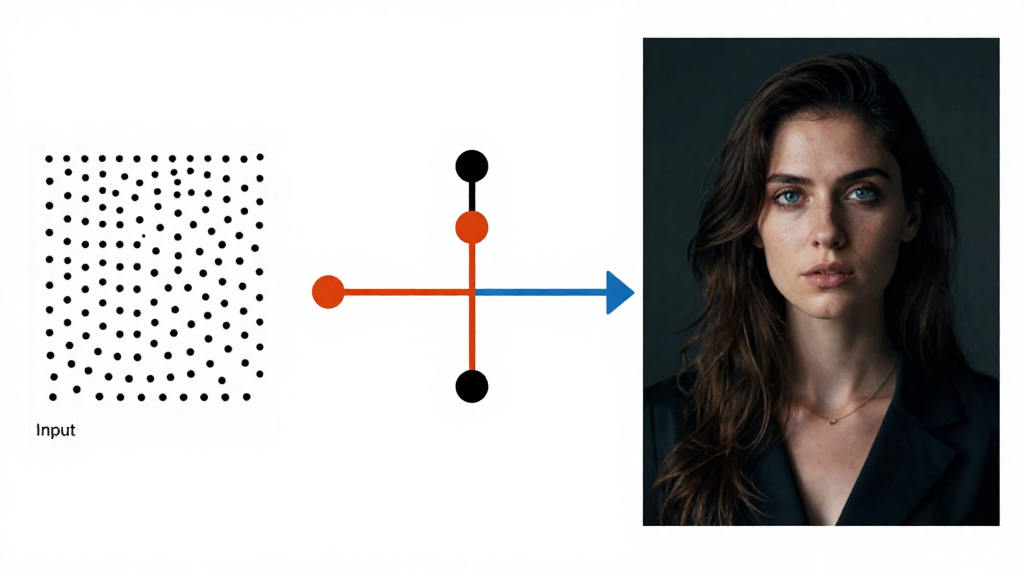

Sparse Autoencoders (SAEs) are emerging as a powerful tool to bridge this fundamental gap. SAEs are a type of neural network designed to learn efficient representations of data. The ‘sparse’ constraint encourages the network to activate only a small number of its neurons for any given input, forcing it to learn more disentangled and interpretable features.

This sparsity is key. By learning to represent data in a sparse, compressed form, SAEs can potentially capture the underlying structure that both neural and symbolic systems aim to model. They offer a way to compress information without losing critical details, making them a promising candidate for integrating the strengths of both AI paradigms.

Why This Matters: Towards More Robust AI

The ability to combine the pattern-matching prowess of neural networks with the explicit reasoning capabilities of symbolic systems is a holy grail in AI research. Such neuro-symbolic systems could lead to AI that is not only more powerful but also more understandable, reliable, and adaptable. Imagine AI that can learn from experience (neural) and then explain its reasoning through clear, logical steps (symbolic).

SAEs provide a concrete mechanism to move in this direction. By learning sparse, interpretable features from data, they can serve as a common ground or intermediate representation that both neural and symbolic components can leverage. This could unlock new levels of performance in complex tasks, from scientific discovery to advanced robotics and human-like language understanding.

This story was based on reporting from Towards Data Science. Read the full report here.